This year marks a major milestone for me: 10 years in the data and analytics industry. Over the past decade, I’ve had the privilege to work across various domains, lead talented teams, implement large-scale cloud architectures, and help organizations transform raw data into strategic advantage.

Effortlessly Copy Data from Zoho to Azure Data Lake Using Azure Data Factory

Are you looking for a simple way to copy data from Zoho to Azure Data Lake? You're in the right place! With Azure Data Factory (ADF), you can automate the process of copying data from Zoho’s API to Azure Data Lake Storage Gen2, making it easier to store and analyze your data.

How to Load Parquet Files from Azure Data Lake to Data Warehouse

By following these steps, you’ll be able to extract, transform, and load (ETL) your Parquet data into a structured data warehouse environment, enabling better analytics and reporting.

How to Copy Data from JSON to Parquet in Azure Data Lake

In this step-by-step guide, we’ll go through the exact process of creating Linked Services, defining datasets, and setting up a Copy Activity to seamlessly transfer your JSON data to Parquet format.

Demystifying AI vs. ML: Understanding the Key Differences

In modern technology, the terms Artificial Intelligence (AI) and Machine Learning (ML) are often used interchangeably, which causes confusion. The distinction is straightforward: AI is the overarching concept of simulating human intelligence in machines, while ML is a subset of AI that enables computers to learn from data and improve their performance iteratively. Understanding this relationship clarifies the distinct role each plays in transforming industries and shaping our future.

How to Refresh the Azure Analysis Services Model Using Azure Data Factory

This blog post will help you build an automated, low-effort way to keep your Azure Analysis Services model up to date using Azure Data Factory, ensuring accurate insights through seamless refreshes.

How to Send Emails to Users Using SparkPost in Azure Data Factory

A step-by-step guide on how to send emails to multiple recipients using SparkPost in Azure Data Factory.

How to Pause and Resume Dedicated SQL Pool (SQL DW) Using Azure Data Factory

Azure Dedicated SQL pool (SQL DW) has many benefits, such as a massively parallel processing (MPP) architecture that scales compute and storage resources independently and enables high-performance analytics. One big issue with this Azure resource, however, is its cost. Microsoft charges for Dedicated SQL pool on an hourly basis, which means you pay whenever the SQL DW is running, even if you are not using it for development or analysis.
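To make the hourly billing concrete, here is a minimal sketch of the arithmetic behind pausing the pool outside working hours. The hourly rate and the 50-hour schedule are hypothetical values chosen for illustration, not actual Azure prices, and the sketch covers compute charges only (storage is still billed while the pool is paused).

```python
HOURS_PER_WEEK = 7 * 24  # 168 hours in a week


def weekly_compute_cost(hourly_rate: float, running_hours: float) -> float:
    """Compute cost for the hours the pool is actually running."""
    if not 0 <= running_hours <= HOURS_PER_WEEK:
        raise ValueError("running_hours must be between 0 and 168")
    return hourly_rate * running_hours


def weekly_savings(hourly_rate: float, running_hours: float) -> float:
    """Difference between an always-on pool and one paused when idle."""
    always_on = weekly_compute_cost(hourly_rate, HOURS_PER_WEEK)
    scheduled = weekly_compute_cost(hourly_rate, running_hours)
    return always_on - scheduled


# Hypothetical rate of 1.50 per hour; pool runs 10 hours a day, 5 days a week.
ASSUMED_RATE = 1.50
ASSUMED_RUNNING_HOURS = 10 * 5

print(weekly_compute_cost(ASSUMED_RATE, HOURS_PER_WEEK))         # always on
print(weekly_compute_cost(ASSUMED_RATE, ASSUMED_RUNNING_HOURS))  # paused when idle
print(weekly_savings(ASSUMED_RATE, ASSUMED_RUNNING_HOURS))       # saved per week
```

Under these assumed numbers the pool would be billed for 50 hours instead of 168 each week, which is why automating pause and resume is worth the effort.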

What are the latest trends in the field of data analytics?

The field of data analytics is evolving rapidly, and new trends and technologies are emerging every month. Below are the latest trends as of now: Augmented Analytics: Augmented analytics combines artificial intelligence (AI) and machine learning (ML) techniques with data analytics to automate data preparation, insight generation, and data visualization. It empowers non-technical users to... Continue Reading →

Will Microsoft Copilot change the Cloud Computing world?

Microsoft Copilot is an AI-powered code completion tool developed by Microsoft in collaboration with OpenAI that uses machine learning to provide code suggestions and completions to developers as they write code. It is designed to help developers be more productive by automating some of the more repetitive tasks of coding. It has the potential to... Continue Reading →

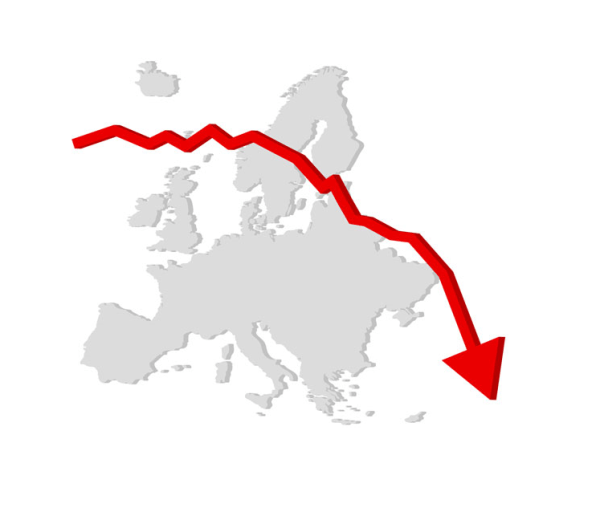

Which jobs are Recession Proof?

A recession, in simple terms, is a slowdown or massive decline in economic activity. A country is said to be in recession if there is a decline in activities like consumption, investment, government spending, and net exports. If you are following the news, then you must be aware that countries like the UK and the USA are slowly moving... Continue Reading →

How to handle duplicate records while inserting data in Databricks

Have you ever faced a challenge where records keep getting duplicated when you insert new data into an existing table in Databricks? If yes, then this blog is for you. Let’s start with a simple use case: inserting Parquet data from one folder in the Data Lake into a Delta table using Databricks. Follow the... Continue Reading →
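The excerpt above does not show the approach the post takes, so the following is only a plain-Python sketch of one common idea: insert a row only when its key is not already present in the target. This mirrors the "insert when not matched" behaviour of a Delta Lake `MERGE` statement. The `insert_new_only` helper, the `id` key column, and the sample rows are all hypothetical.

```python
from typing import Iterable


def insert_new_only(target: dict, incoming: Iterable[dict], key: str = "id") -> int:
    """Insert rows whose key is not yet in `target`; return how many were added.

    `target` maps key values to rows and stands in for the existing table.
    """
    inserted = 0
    for row in incoming:
        row_key = row[key]
        if row_key not in target:  # skip keys that already exist
            target[row_key] = row
            inserted += 1
    return inserted


# Hypothetical existing table and incoming batch.
table = {1: {"id": 1, "name": "alpha"}}
batch = [
    {"id": 1, "name": "alpha"},  # already in the table
    {"id": 2, "name": "beta"},   # new
    {"id": 2, "name": "beta"},   # duplicate within the same batch
]

first_run = insert_new_only(table, batch)
second_run = insert_new_only(table, batch)  # re-running the same load
print(first_run, second_run, sorted(table))
```

Because the check is keyed rather than a blind append, loading the same folder twice leaves the table unchanged, which is the property a duplicate-safe insert needs.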