If you’re working with Microsoft Fabric, this might save you some time.

Microsoft Fabric: Complete Guide + Common Errors and How to Fix Them (Real Production Issues)

Microsoft Fabric is a powerful platform that brings together data engineering, pipelines, lakehouse, and Power BI into a single ecosystem. However, once you move from demos to real production workloads, things start to break.

How to Refresh Power BI Reports from Azure Data Factory (ADF)

Automating your Power BI dataset refresh saves time and keeps your reports up to date. In this post, we’ll walk through how to trigger a Power BI dataset refresh directly from Azure Data Factory (ADF) using an App Registration in Microsoft Entra ID.

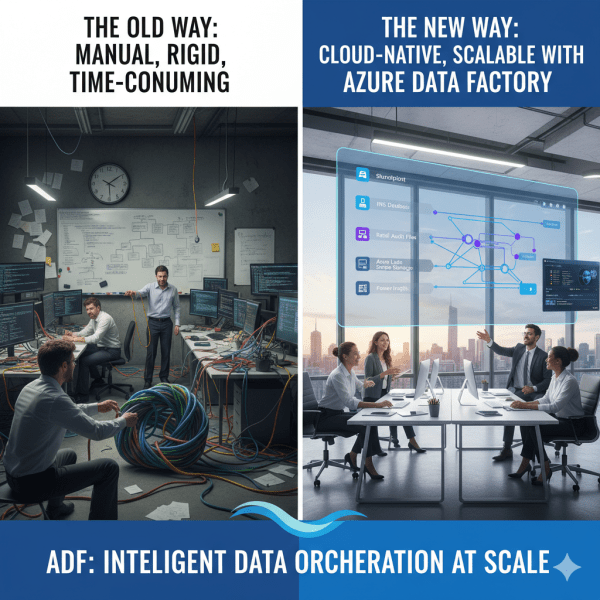
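As a rough illustration of the refresh flow described above, here is a minimal Python sketch of the two HTTP calls an ADF pipeline would typically issue from Web activities: one to obtain a token for the App Registration through the client-credentials flow, and one to call the Power BI REST API refresh endpoint. The function names and placeholder IDs are hypothetical, and the sketch assumes the service principal has already been granted access to the workspace.

```python
import json
import urllib.parse
import urllib.request

# Endpoints for the Entra ID token service and the Power BI REST API.
TOKEN_URL = "https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
REFRESH_URL = (
    "https://api.powerbi.com/v1.0/myorg/groups/{group_id}"
    "/datasets/{dataset_id}/refreshes"
)
POWER_BI_SCOPE = "https://analysis.windows.net/powerbi/api/.default"


def build_token_request(tenant_id, client_id, client_secret):
    """Build the client-credentials token request (hypothetical helper)."""
    body = urllib.parse.urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": POWER_BI_SCOPE,
    }).encode("utf-8")
    return urllib.request.Request(
        TOKEN_URL.format(tenant_id=tenant_id),
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def build_refresh_request(access_token, group_id, dataset_id):
    """Build the dataset refresh request (hypothetical helper)."""
    return urllib.request.Request(
        REFRESH_URL.format(group_id=group_id, dataset_id=dataset_id),
        data=json.dumps({"notifyOption": "NoNotification"}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def trigger_refresh(tenant_id, client_id, client_secret, group_id, dataset_id):
    """Send both requests in order and return the refresh call's HTTP status."""
    token_request = build_token_request(tenant_id, client_id, client_secret)
    with urllib.request.urlopen(token_request) as resp:
        token = json.load(resp)["access_token"]
    refresh_request = build_refresh_request(token, group_id, dataset_id)
    with urllib.request.urlopen(refresh_request) as resp:
        return resp.status
```

In ADF itself, the same two steps would map to a pair of chained Web activities, with the client secret ideally pulled from Azure Key Vault rather than stored in the pipeline.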

How Azure Data Factory Changed the Way We Handle ETL/ELT at Scale

There was a time when moving data from multiple sources felt like untangling a giant knot. Every data refresh meant scripts breaking, manual checks, and long hours spent ensuring everything flowed from source to destination correctly. Then Azure Data Factory (ADF) entered the picture, and it didn’t just simplify ETL and ELT. It completely transformed how we think about data orchestration at scale.

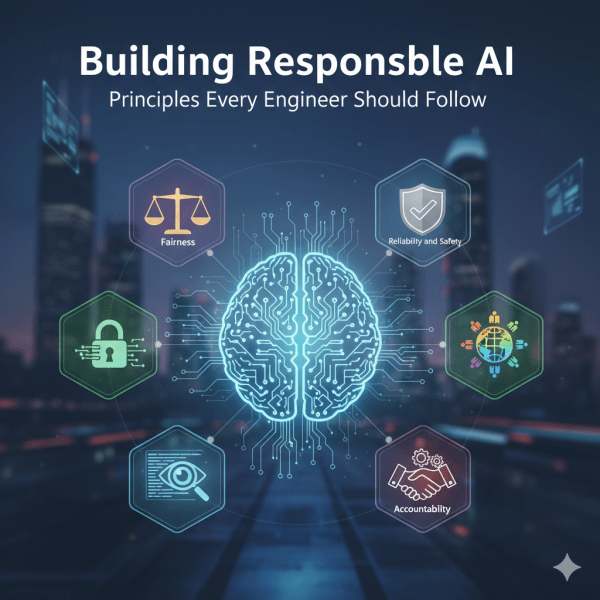

Building Responsible AI: Principles Every Engineer Should Follow

As engineers, we don’t just build systems. We shape experiences that affect people and society. AI is powered by probabilistic models trained on data, which means it can sometimes amplify biases or make mistakes that impact real lives. This is why principles of Responsible AI matter. At Microsoft, several core principles guide the responsible development and deployment of AI systems. Let’s look at them one by one.

Projects in Azure AI Foundry: Where Ideas Turn Into AI Solutions

A project in Azure AI Foundry is a workspace designed for a specific AI development effort. Each project connects to a hub, giving it access to shared resources while also providing its own dedicated environment for collaboration and experimentation.

Hubs in Azure AI Foundry: The Nerve Center of Your AI Development

A hub is the foundation of Azure AI Foundry. Think of it as a control center where all the shared resources, security settings, and configurations for your AI development live. Without at least one hub, you cannot use the full power of Foundry’s solution development capabilities.

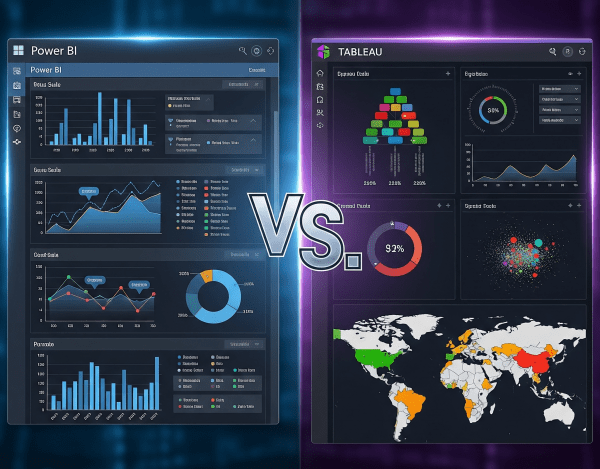

Power BI vs. Tableau: Which One Is Best?

When it comes to data visualization and business intelligence (BI), Power BI and Tableau are two of the most popular platforms in the world. Both turn raw data into insights, but they differ in cost, ecosystem fit, and flexibility.

Data Lake vs. Data Warehouse: When to Use Which?

When organizations talk about becoming data-driven, the debate often comes down to two questions: where should data live, and how should it be structured? That’s where the Data Lake and the Data Warehouse come into play. Both are critical, but their purposes and strengths differ.

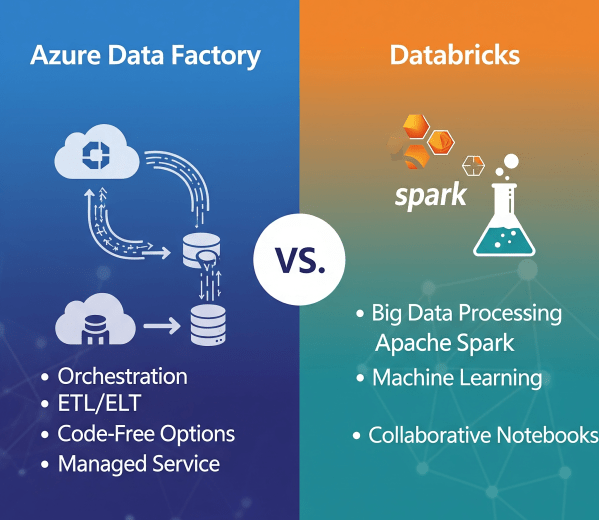

Azure Data Factory vs. Databricks: When to Use What?

In today’s cloud-first world, enterprises have no shortage of data services. But when it comes to building scalable, reliable data pipelines, two names often dominate the conversation: Azure Data Factory (ADF) and Azure Databricks.

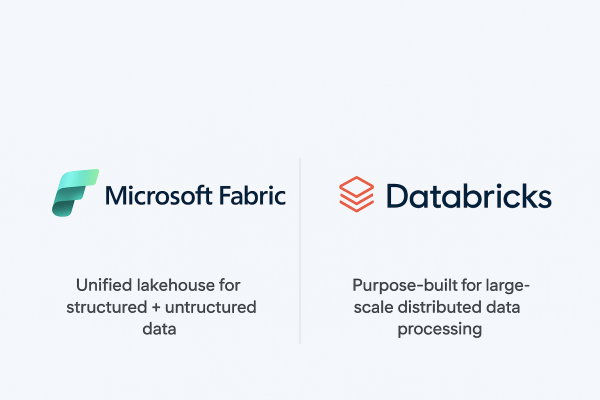

Microsoft Fabric vs. Databricks: When to Use Each?

When it comes to building a modern data platform in Azure, two technologies often spark debate: Microsoft Fabric and Databricks. Both are powerful. Both can process, transform, and analyze data. But they serve different purposes, and the smartest organizations know when to use each.

Real-Time Analytics in Microsoft Fabric

In today’s data-driven world, many business scenarios demand insights not in hours or days, but in seconds. From monitoring IoT devices to tracking live transactions, real-time analytics enables organizations to act immediately. Microsoft Fabric delivers this capability through KQL databases and event streams, making it easier to ingest, query, and analyze fast-moving data at scale.